The Great Memory Shortage

The Consequences of the AI Buildout Continue

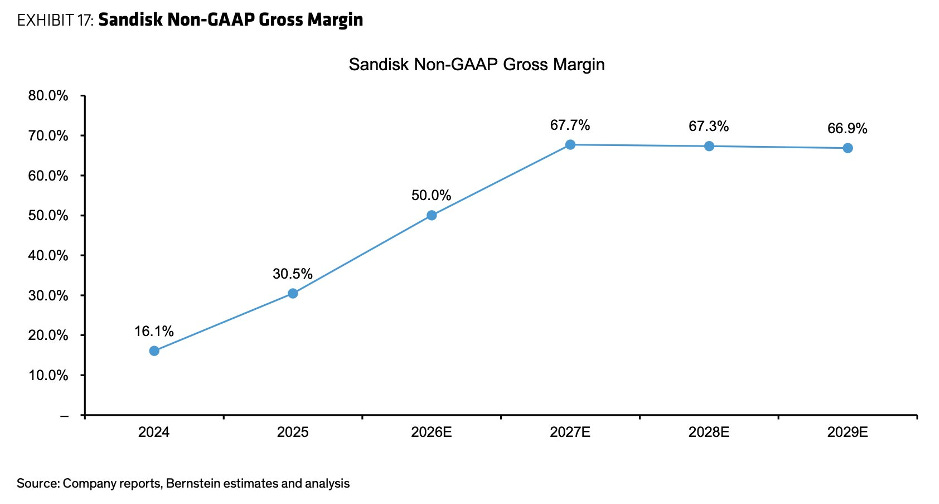

You know something unusual is happening when a company like SanDisk, traditionally viewed by the general public as a stable provider of mature consumer storage products, appreciates by more than 1000% in under a year, with its stock price rising from $38.50 in June 2025 to $453.12 as of 20 January 2026. This is not a business traditionally associated with explosive growth, yet its stock has delivered exactly that. Perhaps most importantly, much of the increase seems to have come not only from retail investor euphoria or speculation, although that is surely a factor, but also from an institutional reassessment of the fundamentals of the business, driven by revenue, profit and margin expansion. Gross margins expanded from 22% to over 40% within twelve months, whilst revenue is forecast to surge 42% in fiscal 2026 to $10.45 billion and earnings to skyrocket 350% to $13.46 per share. Even more striking, some analysts predict gross margins will reach around 65% in 2027 and hold there for a while.

While forward projections can always prove incorrect, especially in dynamic and competitive fields such as AI-related hardware, what is undeniable is that it has already been a crazy time for SanDisk and many other companies in the computer memory industry. Many think there is much more craziness to come, especially as the flow-on effects of this potential memory shortage hit consumer markets and become more tangible to the masses.
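For readers who want to check the headline figure, here is a minimal sketch that recomputes the appreciation from the two share prices quoted above. The function name `percent_change` is purely illustrative, and the calculation ignores dividends, splits and intraday moves.

```python
def percent_change(start: float, end: float) -> float:
    """Return the percentage change from `start` to `end`."""
    return (end - start) / start * 100.0


start_price = 38.50   # June 2025 share price quoted in the article (USD)
end_price = 453.12    # 20 January 2026 share price quoted in the article (USD)

gain = percent_change(start_price, end_price)
multiple = end_price / start_price

print(f"Gain: {gain:.0f}% (about {multiple:.1f}x the starting price)")
# Prints: Gain: 1077% (about 11.8x the starting price)
```

A gain of roughly 1077% means the stock ended at nearly twelve times its starting price, which is consistent with the "more than 1000%" description.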

So how did a mature and seemingly boring stock become a $66 billion company in what felt like the blink of an eye?

While there are many factors at play, including SanDisk moving up the stack into enterprise and high-capacity, high-endurance NAND, the most important is the market’s excess demand for memory chips. With supply unable to keep up, manufacturers are allocating a larger share of existing capacity and resources to the more profitable, higher-paying AI customers. When concerns first emerged about the AI infrastructure buildout, much of the conversation centred on energy consumption. Data centres were projected to strain power grids, potentially driving electricity prices skyward. Yet as the AI industry develops, a different bottleneck is becoming painfully clear: memory chips.

The reason for this explosion in demand for memory products is that modern LLMs and the systems that run them require large amounts of data to be moved rapidly between processors and storage. Unlike traditional computing applications, where processing power is often the primary constraint, many AI workloads are now fundamentally constrained by memory. Training a frontier model involves moving terabytes of parameters across countless iterations, whilst inference demands rapid access to billions of weights simultaneously. Capacity is only half of it, because bandwidth is equally critical. A processor can only work as fast as it can access the data it needs, and as a result High Bandwidth Memory (HBM) has emerged as the gold standard for AI applications because it can deliver vastly more data per second than conventional memory technologies.

The market for advanced memory chips is remarkably concentrated amongst a handful of manufacturers, the main three being Samsung, SK Hynix and Micron. They have traditionally supplied multiple lines of memory chips, from high-end to lower-end, albeit to varying degrees. But demand for HBM and other high-end memory products from AI companies has brought more revenue and higher margins (profit margins are roughly 60–70%, compared with much thinner margins for consumer RAM), so they are concentrating their factories, engineers and resources on high-ticket products and neglecting lower-end and consumer ones.

SK Hynix has reportedly shifted almost all of its advanced cleanroom space to HBM and server-grade DDR5. While it has not officially quit the consumer market, its supply of standard PC RAM has plummeted, contributing to the massive price spikes in the retail market. Micron has taken the most drastic step: in late 2025/early 2026, it made the high-profile decision to exit several consumer chip lines (including its famous Crucial brand for certain retail segments) to focus almost entirely on the enterprise market.

Since Samsung makes final products (phones, tablets, PCs), it is the only player still trying to maintain multiple lines spanning higher- and lower-end memory products, but even it is struggling to keep up. It has had to divert massive amounts of production capacity to keep pace in the HBM market, which has tightened the supply of its consumer SSDs. And although it remains committed to producing these lower-end products, it recently rejected long-term contracts for standard DRAM, choosing instead to raise prices by up to 70% to match the market’s scarcity. As these manufacturers pivot aggressively towards high-margin AI memory products, a predictable consequence is emerging: shortages in the commodity memory that powers everyday devices.

Traditionally, RAM serves as a computer’s high-speed workspace while NAND flash provides permanent storage. However, AI’s massive data requirements have blurred this distinction: NAND is now being re-engineered to function more like active memory, since keeping everything in expensive RAM would be economically unfeasible. This has triggered a supply crisis, as manufacturers reallocate production capacity towards high-margin AI data centre memory products (enterprise DRAM, HBM and performance NAND), leaving consumer markets with tighter supply. The result is rising prices across the board, because AI infrastructure is absorbing the manufacturing capacity that would otherwise serve everyday PC builders and upgraders. In other words, the memory makers are allocating more to AI data centre products and, because of the blurring of memory and storage, so are the storage makers. What is left is a market where supply can barely keep up with vast AI data centre demand, while less is available right now for non-AI customers, who tend to pay less.

Furthermore, unless inference efficiency improves many times over relative to how much energy AI models consume, a massive build-out of AI data centres is still projected for the coming years, and current projections have memory chips as one of the main bottlenecks in that expansion. To give some context, some research estimates that the industry is attempting to add nearly 100GW of new capacity between 2026 and 2030 to meet the explosion in generative AI demand, while a Macquarie research report projects that the current supply capacity of the three major memory makers (Samsung, SK Hynix and Micron) is only adequate for the build-out of about 15GW of AI data centres over the next two years.

Thus, even with new capital investment in manufacturing capacity for storage and memory products, which will take years to come online, much of the new capacity may still be reserved for AI customers, leaving relatively limited supply for other customers unless they pay much higher prices. Industry analysts project DRAM prices could surge by 30-50% over the coming year, with NAND flash following a similar trajectory. On a side note, new generations of Nvidia chips, TPUs and designs from chipmaking start-ups are already delivering multiple-fold gains in inference efficiency, but those gains have to outpace the growth in what models consume for net energy requirements, and thus build-out demand for these chips, to fall.
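To put the two capacity figures on a comparable footing, here is a rough sketch that converts each into an annual rate. It assumes, purely for illustration, that the roughly 100GW target is spread evenly across the five years from 2026 to 2030 and that the 15GW supported by current memory supply is spread evenly over two years. Neither source states this, so treat the result as an order-of-magnitude comparison rather than a forecast.

```python
def annual_rate(total_gw: float, years: float) -> float:
    """Average gigawatts per year, assuming an even spread (an assumption)."""
    return total_gw / years


# Figures quoted in the article.
planned_gw, planned_years = 100.0, 5.0      # ~100GW targeted over 2026-2030
supported_gw, supported_years = 15.0, 2.0   # ~15GW supportable over two years

demand_rate = annual_rate(planned_gw, planned_years)
supply_rate = annual_rate(supported_gw, supported_years)
coverage = supply_rate / demand_rate

print(f"Planned build-out:  {demand_rate:.1f} GW/year")
print(f"Memory supports:    {supply_rate:.1f} GW/year")
print(f"Coverage of plan:   {coverage:.1%}")
```

Under these even-spread assumptions, current memory supply would cover well under half of the planned annual build-out, which is why memory shows up as a bottleneck in the projections.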

So, is everyone going to be constantly feeling the effects on their budget when buying technology products over the next few years? The answer is probably yes, but not all buyers will be affected equally. Companies with sufficient scale and foresight are moving aggressively to lock in supply at fixed prices. Apple, with its massive purchasing power and sophisticated supply chain management, has reportedly secured long-term agreements for significant portions of its NAND requirements. Its ability to commit to enormous volumes years in advance gives it leverage that smaller manufacturers can’t replicate. Yet even Apple cannot insulate itself entirely. Whilst it may have hedged its NAND exposure to a degree, reports suggest portions of its DRAM requirements remain uncontracted and exposed to spot market pricing.

For consumer products, memory chips represent a substantial portion of the bill of materials for virtually every electronic device. A high-end smartphone can incorporate between US$100 and US$150 worth of memory and storage components. Laptops and professional workstations can exceed this, often incorporating US$200 to US$350 in memory-related costs alone. That $1000 smartphone may become a $1100 smartphone. The $1500 laptop may stretch towards $1600. Gaming consoles, tablets, smart televisions and automotive electronics will all probably feel the pull of rising memory costs. The cumulative impact across a household’s technology purchases could easily reach hundreds to thousands of dollars annually.
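As a rough illustration of how memory prices feed into device costs, the sketch below applies a few price-rise scenarios to the bill-of-materials ranges quoted above. The 30% and 50% scenarios echo the projected DRAM increases and the 70% scenario echoes the upper end of the reported contract price rises. The `added_cost` function and its `pass_through` parameter are hypothetical, and full pass-through at cost with no retail markup is an assumption.

```python
def added_cost(memory_bom_usd: float, price_rise: float,
               pass_through: float = 1.0) -> float:
    """Extra cost per device if memory prices rise by `price_rise` (0.30 = 30%).

    `pass_through` is the share of the increase passed on to the buyer
    (1.0 = all of it). This is an illustrative assumption, not a reported figure.
    """
    return memory_bom_usd * price_rise * pass_through


# Memory-related bill-of-materials ranges quoted in the article (USD).
bom_ranges = {
    "high-end smartphone": (100, 150),
    "laptop/workstation": (200, 350),
}

# Scenario price rises: 30% and 50% (projected DRAM range), 70% (upper end
# of reported contract price increases).
price_rises = (0.30, 0.50, 0.70)

for device, (low, high) in bom_ranges.items():
    for rise in price_rises:
        print(f"{device}: +{rise:.0%} memory prices -> "
              f"${added_cost(low, rise):.0f} to ${added_cost(high, rise):.0f} extra")
```

In the steepest scenario, a smartphone at the top of the quoted range picks up about $105 of extra cost, in line with the roughly $100 increase described above. Milder scenarios or partial pass-through give smaller figures.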

Standard economics suggests that such large profit margins should attract new supply, and memory manufacturing is indeed cyclical: periods of shortage drive investment that eventually creates gluts, which then lead to underinvestment and subsequent shortages. The current dynamics look like they fit this pattern, as manufacturers are responding with capacity expansion plans. SK Hynix has announced multibillion-dollar investments in HBM production facilities. Micron is breaking ground on new fabrication plants in the United States, subsidised partially by government semiconductor initiatives. Samsung continues to expand its memory capacity. Many other players are entering the market or expanding existing operations. These investments should, in theory, eventually rebalance supply and demand.

However, it is not as simple as it seems, and the risk involved in expanding capacity may push these companies towards a more cautious and slower approach. Building a modern memory fabrication facility takes three to four years from ground-breaking to volume production. The capital requirements are staggering, with a single advanced megafab now costing between $15 billion and $20 billion, and some next-generation facilities projected to exceed $30 billion, or even $100 billion for multi-fab complexes. These extreme costs create understandable hesitation and fear of overbuilding. A manufacturer committing to such investments today must predict the market landscape of 2028 or 2029, a timeframe that is nearly impossible to forecast confidently in the rapidly evolving world of AI technology. Memory chipmakers are wary of repeating previous cycles in which aggressive overbuilding led to global oversupply and a subsequent collapse in prices. This discipline, if it holds, may mean supply takes longer to catch up with demand. Furthermore, legacy or lower-margin products, such as the standard memory found in consumer electronics and home appliances, may fail to receive adequate investment or manufacturing attention for the reasons above, leaving those products vulnerable to sustained shortages and price instability.

However, the current shortage will likely not prove catastrophic or permanent. Market mechanisms do work, albeit with lags. The extraordinary margins available in memory production will attract capital, encourage capacity expansion, and eventually rebalance supply. New entrants may emerge, though the technical and capital barriers to memory manufacturing are formidable. Existing manufacturers will, despite their caution, ultimately expand capacity because the profit opportunity is too substantial to ignore entirely. But the lags could be painful and may provoke an uproar.

Ultimately, what we’re seeing is the price of the AI buildout in a world of limited resources. Silicon fabrication capacity, cleanroom facilities, advanced lithography equipment and skilled engineers all exist in finite quantities. When markets decide to pursue a particular technological direction with intensity, resources are allocated in that direction. Markets, both free and interventionist, have rendered their verdict for now: AI development is sufficiently important to warrant enormous resource allocation. Through a combination of venture capital enthusiasm, corporate strategic investments, and government industrial policy, hundreds of billions of dollars are being directed towards AI infrastructure. This capital represents a claim on the real resources that make cutting-edge semiconductors possible.

When AI infrastructure makes a larger claim on these resources, other claimants necessarily receive less relative to the overall pie. The consumer buying a laptop, the small manufacturer producing IoT devices and the automotive company building electric vehicles all find themselves competing for resources against deep-pocketed hyperscalers and firms building AI data centres. We can and, in all likelihood, will increase overall capacity and expand the pie, allowing for increased production and supply across the board. But it will take time, and those with less purchasing power will still receive relatively less than those with more.

Predicting the future economic landscape of the memory market is a difficult task due to the volatile nature of technological progression and shifting market requirements. While current trends suggest a sustained demand for HBM and expanded data centre infrastructure will continue to push tight supply conditions for memory and storage chips, these trajectories are subject to sudden shifts in end-user demand or a potential cooling of AI investment. Technological shifts also present significant variables. The further development and adoption of new types of specialised inference chips or more efficient hardware architectures could fundamentally reduce the physical footprint and memory capacity required to sustain complex computations. Furthermore, advancements in software efficiency and algorithmic optimisation may allow for sophisticated processing with significantly lower hardware overhead than is currently anticipated. Consequently, while current trends point to the great memory shortage, the inherent unpredictability of innovation means that existing paradigms should be viewed as subject to change at any moment.

Whether you agree with this buildout or not, and whether these investments deliver the benefits and value to society that are envisioned, are important and genuine questions that need to be answered. However, for now, regardless of your position, it is clear that there is already a great memory shortage on our hands, and one that may only worsen.

*Disclaimer: This information is for general informational purposes only and does not constitute financial, investment, or professional advice. The author may hold positions in the assets or companies discussed.

Bonnefin Research is a free publication focused on better investment thinking. Subscribe and share to support the work, and join the discussion in the comments as we continue building this community.